Elon Musk’s Legal Battle Over OpenAI: What’s Really at Stake?

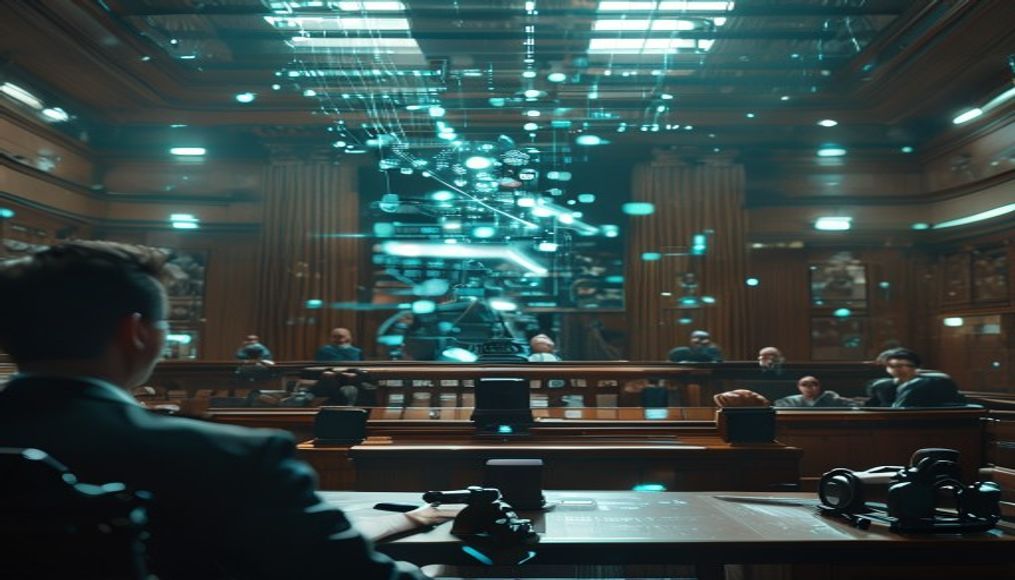

Imagine the two biggest visionaries shaping AI — Elon Musk and Sam Altman — in a courtroom fight over the future of OpenAI, a company whose work powers tools you probably use every day. This isn’t just a boardroom spat; it could decide the fate of AI innovation worldwide and whether OpenAI can continue running as a for-profit business. The stakes? Higher than you might expect.

—

Key Takeaways

- Elon Musk and Sam Altman’s legal dispute challenges OpenAI’s corporate structure before its IPO.

- The case may redefine how AI companies balance profit goals with ethical responsibilities.

- OpenAI’s survival as a for-profit entity is on the line, impacting AI’s commercial future.

- This legal drama reflects broader tech industry debates about AI governance and transparency.

—

The Full Story

Earlier this year, an unexpected legal showdown emerged between Elon Musk, one of OpenAI’s early backers, and Sam Altman, OpenAI’s CEO. The core conflict? Whether OpenAI can remain a for-profit enterprise, especially as it prepares for a massive public stock offering. Musk alleges that, in moving away from its nonprofit roots, the company has strayed too far from its original mission and may be prioritizing money over responsible AI development.

OpenAI started as a nonprofit research collective with ethics at its core, promising not to put profit ahead of safety. But the AI gold rush and rapid advances have complicated that vision. OpenAI’s transition to a “capped-profit” model aimed to attract the huge investments needed to train advanced models, some of which require enormous amounts of GPU time alone. According to a report from the International Energy Agency (IEA), training some of today’s top AI models can consume as much energy as 100 U.S. households use in a year, reflecting the immense scale of resources involved.

What’s not always clear from public statements is that this court case reaches far beyond a shareholder dispute. It strains the long-term trust among investors, researchers, and regulators over how to develop AI safely. Should a private company that answers to Wall Street control technology that will reshape global economies and societies? Musk’s lawsuit turns this dilemma into a very public drama.

The Bigger Picture

This legal battle exemplifies a growing tension within the AI sector: commercial success versus ethical governance. In the last six months, we’ve seen related tremors:

- Google’s DeepMind restructuring itself to better align research with Alphabet’s commercial interests.

- OpenAI introducing GPT-4 Turbo, balancing open research with proprietary control.

- China pushing new regulations on AI to prevent monopolies while promoting innovation.

Think of this as a neighborhood dispute over who owns the streetlight that everyone depends on. If one homeowner decides to charge for light usage, is the streetlight still a community asset, or has it become a private toll booth? Musk’s case forces a fundamental question: can AI remain a common good if it’s also a product sold for profit?

The outcome here might set legal precedents for AI governance globally. With governments worldwide still hashing out AI policies, a court taking a stance on corporate AI structures could shape regulation, because rulings in individual cases often arrive before legislation does.

Real-World Example: How This Hits a Small Business

Sarah owns a small digital marketing agency in Austin with a dozen employees. Over the past year, she’s relied heavily on OpenAI’s tools to generate campaign ideas, optimize copy, and automate client reporting. Affordable, accessible APIs allow her to compete against agencies with much larger staffs.

If Musk’s legal challenge forces OpenAI to revert to a nonprofit or limits investment, Sarah’s costs could skyrocket. OpenAI might have to cut back services or raise prices sharply to sustain costly AI research. Suddenly, the tools that level the playing field become luxuries only big firms can afford.

For Sarah, it’s a reminder that innovation is tied not just to tech breakthroughs but also to who controls these platforms—and how they’re governed.

The Controversy or Catch

Critics argue Musk’s intervention is a power play cloaked in ethics. Some industry insiders suggest he wants to slow OpenAI’s dominance or regain influence. Others fear the lawsuit could freeze innovation, deterring investors concerned about unstable governance.

Moreover, questions remain about what “capped-profit” really means in practice. OpenAI capped returns for its first-round investors at 100x their investment, yet the details are murky, and some analysts worry the company is drifting toward traditional profit-maximizing behavior that is inconsistent with its public mission.
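To make the 100x cap concrete, here is a minimal Python sketch of how a return cap limits what an investor can collect. Only the 100x multiple comes from the article. The function name, the dollar figures, and the assumption that anything above the cap goes somewhere other than the investor are illustrative, not OpenAI’s actual terms.

```python
def capped_payout(investment, gross_return, cap_multiple=100):
    """Split a gross return into the investor's capped share and the excess.

    Hypothetical helper: cap_multiple=100 mirrors the 100x figure in the
    article; everything else is an assumption made for illustration.
    """
    cap = investment * cap_multiple          # most the investor can receive
    investor_share = min(gross_return, cap)  # payout never exceeds the cap
    excess = gross_return - investor_share   # whatever is left above the cap
    return investor_share, excess


# Made-up example: $10M invested, stake eventually worth $1.5B.
investor_share, excess = capped_payout(10_000_000, 1_500_000_000)
print(f"Investor receives: ${investor_share:,}")
print(f"Above the cap:     ${excess:,}")
```

In this made-up case the investor’s payout stops at $1 billion, and the remaining $500 million sits above the cap. The murkiness critics point to is about exactly these parameters: who the cap applies to and what happens to the excess.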

The flip side? Without strong profit incentives, cutting-edge AI research may stall. Training models like GPT-4 costs upward of $100 million, and without a viable business model, companies might scale back essential safety testing or open research output in favor of short-term survival.

Finally, there’s a transparency challenge. How much can outsiders learn about internal disputes or real governance decisions in a private company? Legal battles often drag on in secrecy, leaving stakeholders uncertain about the company’s future.

What This Means For You

Whether you’re a tech investor, entrepreneur, or just a user of AI-driven products, this story impacts you. Here’s what you can do this week:

1. Review your AI service dependencies. Check whether your daily workflows rely on tools from companies like OpenAI and assess your risk if pricing or availability changes.

2. Advocate for transparency. Join industry forums or social media groups pushing for clearer disclosure from AI providers about governance and pricing.

3. Stay informed on regulatory updates. Government responses to AI’s risks are evolving rapidly—follow credible sources like McKinsey’s AI insights to anticipate shifts affecting your business.
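The first step can be roughed out in a few lines of Python. This is a sketch only: the tool names, monthly amounts, and the 3x price multiplier are invented for illustration, so swap in your own numbers.

```python
def price_shock(monthly_spend, multiplier):
    """Estimate new monthly costs if every AI tool's price changes by `multiplier`.

    Hypothetical helper: monthly_spend maps a tool or workflow name to its
    current monthly cost in dollars.
    """
    new_costs = {tool: cost * multiplier for tool, cost in monthly_spend.items()}
    increase = sum(new_costs.values()) - sum(monthly_spend.values())
    return new_costs, increase


# Made-up figures for a small agency.
spend = {"copy_generation": 120.0, "client_reporting": 80.0}
new_costs, increase = price_shock(spend, 3.0)

for tool, cost in new_costs.items():
    print(f"{tool}: ${cost:,.2f} per month")
print(f"Extra cost per month: ${increase:,.2f}")
```

Running the numbers for a few scenarios (a modest increase, a sharp one, a tool disappearing entirely) shows which workflows would hurt most and where a backup provider is worth lining up.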

Our Take

This isn’t just a startup quarrel. It’s a showdown over how we collectively steward a technology with unprecedented power and risk. While Elon Musk’s track record on predictions is mixed, his concerns about AI safety are not unfounded. However, using the courts to challenge OpenAI’s profit model risks slowing development and could scare off the capital that development requires.

In our view, the best path forward balances strict independent oversight with sustainable commercial incentives. The court’s ruling should emphasize transparency and clear safeguards over dogmatic adherence to nonprofit models that no longer fit AI’s immense costs.

Closing Question

If AI companies need billions to innovate but also must protect society’s interests, who should decide the rules—private investors, CEOs like Elon Musk and Sam Altman, governments, or some new form of public oversight?
